Hollywood's Generative AI Future

Digital Marketing News: Advertising, AI, Consumer Psychology, Search, Social Media, Society, Music Monday & Glorious Midjourney Mistakes.

While Barbenheimer was killing it at the box office this past weekend, the writers and actors who create our entertainment were on strike.

The Writers Guild of America (WGA) and the Screen Actors Guild - American Federation of Television and Radio Artists (SAG-AFTRA) want contracts that prevent an AI from replacing them.

The Verge reports:

When asked about the proposal during the press conference, Crabtree-Ireland [SAG-AFTRA’s chief negotiator] said that “This ‘groundbreaking’ AI proposal that they gave us yesterday, they proposed that our background performers should be able to be scanned, get one day’s pay, and their companies should own that scan, their image, their likeness and should be able to use it for the rest of eternity on any project they want, with no consent and no compensation. So if you think that’s a groundbreaking proposal, I suggest you think again.”

I remember the last time the writers went on strike; it marked the end of Deadwood, one of my favorite HBO series. Projects that are currently in production include the movie Deadpool 3 and TV shows Stranger Things and The Last of Us.

This is a trend I’ve been tracking for years. The estate of ’50s-era actor James Dean leased the rights to the Rebel Without A Cause star for casting in a new movie.

Generative AI will only accelerate this trend of actors’, musicians’, and even writers’ estates earning bank in perpetuity. So, writers and actors are justified in protecting the rights to their identities.

The studios have every incentive to cash in on the limitless revenue possibilities that generative AI presents. If you can capture an actor’s likeness and voice and then spin them up into any story line without bothering to pay them for it, I mean, why not, right?

Strap in, because this is going to be a long one. The unions are fighting over existential issues.

The flip side of this is that the job title of Actor may have an expiration date.

It is currently possible to generate imagery for characters using visual tools like Midjourney, which can also create 3D renderings. Generative AI audio tools can create voices for those characters, plus sound effects and soundtracks for a movie. While generative AI video tools are still fairly primitive, they’ll improve. Even so, you could throw all these assets into a video game engine, and if you’ve got a good script and a clever imagination, there’s no reason someone with the time and drive to create their own movie couldn’t do so.

There will come a time when a blockbuster movie will have been created by some kid sitting in a basement somewhere in the world.

Digital Marketing

Advertising

Content Marketing Institute - Beware: Automated AI-Generated Content Can Ruin Everything for Marketers - …an unfortunate and inevitable report came out from NewsGuard, a company that rates the credibility of information websites. It found some of the world’s largest blue-chip brands unintentionally support the spread of unreliable AI-generated news websites.

It identified over 140 brands spending programmatic advertising dollars on AI-generated news sites with little or no human management. And the number of those outlets known as UAINs – unreliable artificial-intelligence-generated news sites – increases exponentially. In one month this year, they jumped from 49 to 217.
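For a sense of scale, here is a quick back-of-the-envelope sketch of that one-month jump from 49 to 217 sites. The function name is my own invention, not anything from NewsGuard, and two data points alone can't tell you whether the growth is truly exponential.

```python
def growth_summary(start: int, end: int) -> dict:
    """Return the growth multiple and percentage increase between two counts."""
    multiple = end / start
    percent_increase = (end - start) / start * 100
    return {
        "multiple": round(multiple, 2),
        "percent_increase": round(percent_increase, 1),
    }


# Figures from the NewsGuard excerpt: 49 sites grew to 217 in one month.
summary = growth_summary(49, 217)
print(summary)  # {'multiple': 4.43, 'percent_increase': 342.9}
```

In other words, the count more than quadrupled in a month.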

This is the first I’ve seen of anyone quantifying generative AI crap content, which I discussed in more depth here:

CNBC - How the generative A.I. boom could forever change online advertising - As these new offerings improve over time, a bicycle company, for example, could theoretically target Facebook users in Utah by showing AI-generated graphics of people cycling through desert canyons, while users in San Francisco could be shown cyclists cruising over the Golden Gate Bridge, ad experts predict. The text of the ad could be tailored based on the person’s age and interests.

Artificial Intelligence

One Useful Thing - What AI can do with a toolbox... Getting started with Code Interpreter - You don’t have to code, because it does all the work for you. All the major LLMs write code, but you have to run and debug it yourself, even though the AI helps. For people who never really used Python before (like myself) this was annoying, and involved going back and forth with the AI to correct errors. Now, the AI corrects its own errors and gives you the output.

I’m having a lot of fun experimenting with ChatGPT’s Code Interpreter but use it with a big caveat: It’s important to understand the basic technology underlying these tools and with Code Interpreter in particular, it’s helpful to have some basic knowledge of programming.

CNBC - Bill Gates says A.I. could kill Google Search and Amazon as we know them - The future top company in artificial intelligence will likely have created a personal digital agent that can perform certain tasks for people.

The technology will be so profound, it could radically alter user behaviors. “Whoever wins the personal agent, that’s the big thing, because you will never go to a search site again, you will never go to a productivity site, you’ll never go to Amazon again,” he said.

It is possible that a world centered on personal digital assistants spells the end of online destinations for humans, but the digital assistants will still need source material. What that source material looks like remains to be seen.

TechCrunch - FTC reportedly looking into OpenAI over ‘reputational harm’ caused by ChatGPT - The FTC is reportedly in at least the exploratory phase of investigating OpenAI over whether the company’s flagship ChatGPT conversational AI made “false, misleading, disparaging or harmful” statements about people. It seems unlikely this will lead to a sudden crackdown, but it shows that the FTC is doing more than warning the AI industry of potential violations.

The Washington Post first reported the news, citing access to a 20-page letter to OpenAI asking for information on complaints about disparagement. The FTC declined to comment, noting that its investigations are nonpublic.

Sound familiar?

Wired - How to Use Generative AI Tools While Still Protecting Your Privacy - Essentially, anything you input into or produce with an AI tool is likely to be used to further refine the AI and then to be used as the developer sees fit. With that in mind—and the constant threat of a data breach that can never be fully ruled out—it pays to be largely circumspect with what you enter into these engines.

A good cautionary note.

9To5Google - Bard 'Extensions' will add Google and third-party services - Google has prepared a way for Bard to directly integrate with the company’s own tools – like Google Maps, Google Flights, and YouTube – as well as third-party services like Instacart, Kayak, OpenTable and Zillow.

Google has joined the plugin party.

KDNuggets - ChatGPT Dethroned: How Claude Became the New AI Leader - The team drafted a Constitution that included the likes of the Universal Declaration of Human Rights, or Apple’s terms of service.

This way, the model not only was taught to predict the next word in a sentence (like any other language model) but it also had to take into account, in each and every response it gave, a Constitution that determined what it could say or not.

Next, instead of humans, the actual AI is in charge of aligning the model, potentially liberating it from human bias.

But the crucial news that Anthropic has released recently isn’t the concept of aligning their models to something humans can tolerate and utilize with AI, but a recent announcement that has turned Claude into the unwavering dominant player in the GenAI war.

Specifically, it has increased its context window from 9,000 tokens to 100,000. An unprecedented improvement that has incomparable implications.

I haven’t had a lot of time to devote to experimenting with Claude yet, but it does not disappoint as a summarizing tool. I can’t tell you how many abandoned PDFs I have in my downloads folder that I had every intention of reading but never got around to because they were tl;dr. (Well, I probably could, but I’m too lazy to count them for you. Trust me, it’s a lot.)
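To put the jump from 9,000 to 100,000 tokens in practical terms, here is a rough sketch for guessing whether a document will fit in a context window. It assumes about 4 characters per token, which is only a rule of thumb for English text and not Anthropic's actual tokenizer. The function names and the reserve for the model's reply are my own assumptions.

```python
# Assumption: roughly 4 characters per token for English text.
# This is a rule of thumb, not a real tokenizer; actual counts vary by model.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count of a text, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, context_window: int, reserve: int = 2_000) -> bool:
    """Check whether text fits, leaving `reserve` tokens for the reply."""
    return estimate_tokens(text) + reserve <= context_window


# A stand-in for a long PDF: 60,000 five-character "words" (300,000 characters).
document = "word " * 60_000

print(estimate_tokens(document))           # 75000
print(fits_in_context(document, 9_000))    # False
print(fits_in_context(document, 100_000))  # True
```

A report that long would have had to be chopped into pieces before. Under these assumptions, it now goes in whole.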

The Verge - The biggest AI release since ChatGPT - Many believe that [Meta’s] Llama 2 is the industry’s most important release since ChatGPT last November, though it obviously won’t generate as much press buzz as a developer-facing release. Companies will now be able to more easily and cheaply build bespoke bots with proprietary data that would never be accessible externally, like the internal AI bot that Stripe recently rolled out for its employees. This will make AI chatbots of all kinds more useful and personalized, which is an exciting step in the right direction.

But as always, and especially with the new release by Meta, the devil is in the details. Llama 2 may be the most freely accessible model of its caliber. But its licensing restrictions mean that it’s not technically “open source,” even if Meta wants the world to believe it is.

This is where much of the value and innovation will come from generative AI. Being able to sandbox AI within your organization and train it on your own data sets will unlock a tremendous amount of value from organizational assets that have been dormant.

The Verge - Apple is already using its chatbot for internal work - Apple is using an internal chatbot to help its employees “prototype future features, summarize text and answer questions based on data it has been trained with,” says Bloomberg’s Mark Gurman in Power On today.

Apple hasn’t been sure what it wants to do with its Apple GPT chatbot project on the customer-facing side yet, but Gurman’s report shed some light on at least its internal chatbot uses. According to the newsletter, Apple is looking at ways to expand the use of generative AI within its organization, with one possibility being giving the tool to its AppleCare support staff to better help customers dealing with issues.

What did I just say?

Consumer Psychology

Neuroscience News - Intentions Matter: How Source Intent Influences Perceptions of Truth - Psychologists revealed people’s judgments of truthfulness are influenced by what they perceive as the information source’s intentions.

They found that even when individuals knew the factual accuracy of a claim, their judgment of its truth was affected by whether they thought the source was trying to deceive or inform them. This tendency held true for both politicized and non-politicized topics.

I do a lot of public affairs work where the issues we need to communicate typically are politicized. That’s why surveys like Gallup’s institutional confidence poll are so frustrating; they shed no light on why one group or another lacks trust in a given institution. I mean, you can make a lot of assumptions based on the respondent group. One can, for instance, have little confidence in a given institution’s ability to solve a particular problem yet still trust or have faith in that institution.

It’s important to understand the subtle perceptions of reputations if you are to effectively communicate with a given target audience.

PsyPost - New psychology research indicates that social rigidity is a key predictor of cognitive rigidity - Recent research found a strong connection between social rigidity and cognitive rigidity, suggesting that inflexible thinking in one area tends to be associated with inflexible thinking in another. The study, published in Psychological Research, provides evidence that people who embrace rigid political and social attitudes tend to perform worse on tests of problem-solving abilities.

Well, this is discouraging.

Search

Search Engine Journal - WordPress 6.3 Will Improve LCP SEO Performance - Largest Contentful Paint (LCP) is a metric that measures how long it takes to render the largest image or text block. The underlying premise of this metric is to reveal a user’s perception of how long it takes to load a webpage.

What’s being measured is what the site visitor sees in their browser, which is called the viewport.

The optimizations achieved by WordPress in 6.3 achieve a longstanding effort to precisely use HTML attributes on specific elements to achieve the best Core Web Vitals performance.

Can we stop with programmers naming metrics already?!? Largest Contentful Paint? What the hell does that mean?

Yeah, I know, it is defined in my excerpt but still.

Despite my annoyance with language insufficiencies, LCP is an important reputational metric which I’ll get into in greater depth later in Chapter 2 on website user experience.
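To make the metric a little less abstract, here is a small sketch that grades an LCP measurement against the thresholds Google publishes for Core Web Vitals: 2.5 seconds or less is "good" and anything over 4 seconds is "poor." The function name is my own, and the sample timings are made up for illustration.

```python
# Thresholds from Google's Core Web Vitals guidance for LCP, in seconds.
GOOD_MAX_SECONDS = 2.5
POOR_MIN_SECONDS = 4.0


def rate_lcp(seconds: float) -> str:
    """Classify a Largest Contentful Paint time as good, needs improvement, or poor."""
    if seconds < 0:
        raise ValueError("LCP time cannot be negative")
    if seconds <= GOOD_MAX_SECONDS:
        return "good"
    if seconds <= POOR_MIN_SECONDS:
        return "needs improvement"
    return "poor"


# Hypothetical measurements, not data from any real site.
for sample in (1.8, 3.2, 5.6):
    print(f"{sample:.1f}s -> {rate_lcp(sample)}")
```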

Washington Post - Web Performance and SEO Guidelines now available from The Washington Post - The Washington Post today announced that Web Performance and SEO Best Practices and Guidelines are now available on The Post’s site. Following the release of The Post’s accessibility guidelines, these new guidelines will ensure that the Post is providing a positive user experience, increasing website visibility, driving organic traffic, and ultimately, improving the site's overall success. As part of The Post's open-source design system, these guidelines are also available for use by anyone on the web to ensure best practices for web performance and SEO are met.

When an organization as significant as the Washington Post publishes such best practices, it would be wise to pay attention.

Social Media

Scott Galloway - Threadzilla - We’re not only witnessing the unraveling of a firm, but a person. I have written about mens’ need for guardrails. These can take several forms — an office, a girlfriend, regulation, a board. The erosion of Musk’s guardrails as money and sycophants melt whatever better judgment or grace he had has resulted in a reputation experiencing the same trajectory as Twitter’s revenue. If Elon had never downloaded the micro-blogging app he’d be much wealthier and universally revered for his formidable accomplishments. Instead, he’s set a land speed record for hero to villain.

This echoes some of the sentiments I discussed in February regarding Silicon Valley libertarianism.

Ars Technica - Fear, loathing, and excitement as Threads adopts open standard used by Mastodon - Days after Meta launched its new app, Threads, this month, a software engineer at the company named Ben Savage introduced himself to a developer group at the World Wide Web Consortium, a web standards body. The group, which maintains a protocol for connecting social networks called ActivityPub, had been preparing for this moment for months, ever since rumors first emerged that Meta planned to join the standard. Now, that moment had arrived. “I'm really interested to see how this interoperable future plays out!” he wrote.

Warm replies to Savage’s email filtered in. And then came another response:

“The company you work for does disgusting things among others. It harms relationships and isolates people. It builds walls and lures people into them. When that doesn't suffice, brutal peer pressure does … That said, welcome to the list, Ben.”

This is kind of an amusing article in a geeky sort of way, but the idea of Meta supporting the Fediverse is an intriguing one. If it follows through on this initial move and commits to integrating ActivityPub, it could fundamentally reshape the entire social net.
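If you are wondering what ActivityPub actually passes between servers, it is JSON built on the ActivityStreams vocabulary. Here is a minimal sketch of a public post wrapped in a "Create" activity. The actor URL and the function name are hypothetical, and a real server would add ids, timestamps, and delivery logic that this leaves out.

```python
import json

# The special ActivityStreams address that marks a post as public.
PUBLIC = "https://www.w3.org/ns/activitystreams#Public"


def build_create_note(actor: str, content: str) -> dict:
    """Build a bare-bones Create activity wrapping a Note object."""
    return {
        "@context": "https://www.w3.org/ns/activitystreams",
        "type": "Create",
        "actor": actor,
        "to": [PUBLIC],
        "object": {
            "type": "Note",
            "attributedTo": actor,
            "content": content,
            "to": [PUBLIC],
        },
    }


# Hypothetical account on a made-up server, for illustration only.
activity = build_create_note(
    "https://example.social/users/ben",
    "Hello, Fediverse!",
)
print(json.dumps(activity, indent=2))
```

Because the format is an open standard, any server that speaks it can read a post like this, whether it runs Mastodon or something from Meta.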

Society

Politico - One senator’s big idea for AI - There is an exception to the legislative inertia: Sen. Gary Peters (D-Mich.).

While not a headline name in the broader conversation about AI in Washington, Peters — who chairs the Senate Committee on Homeland Security and Governmental Affairs — pushed several AI bills through Congress in the years preceding this spring’s sudden hype cycle. He’s already sent two AI bills to the Senate floor this year. And last week Peters quietly introduced a third bill, the AI LEAD Act, which is scheduled for a markup on Wednesday.

His bills focus exclusively on the federal government, setting rules for AI training, transparency and how agencies buy AI-driven systems. Though narrower than the plans of Schumer and others, they also face less friction and uncertainty — and may offer the simplest way for Congress to shape the emerging industry, particularly when it’s not clear what other leverage Washington has.

This is a really smart approach to actually getting some sort of regulatory regime in place that could mean something. Do not underestimate the power of the federal government’s purse to effect change.

The federal government already has plenty of AI contractors on the books (think Palantir). Imposing regulations as a requirement of doing business with the government will definitely shape the behavior of contractors and their subcontractors.

USA Today - More Millennial, Gen Z women are finding interest in ‘trad wife’ lifestyles. What to know. - The “trad wives,” or a traditional wife lifestyle gained popularity on social media. Now more young women are exploring the alternative lifestyle.

Are you kidding me?!? What the actual F? Here’s an example of the adoption of an aesthetic devoid of its context or history. It kinda feels like the “trad wife” adherent featured in this piece has just discovered that context and history and is struggling to justify the trend.

Music Monday

This song is as relevant today as when it was released. Different problems, same issue.

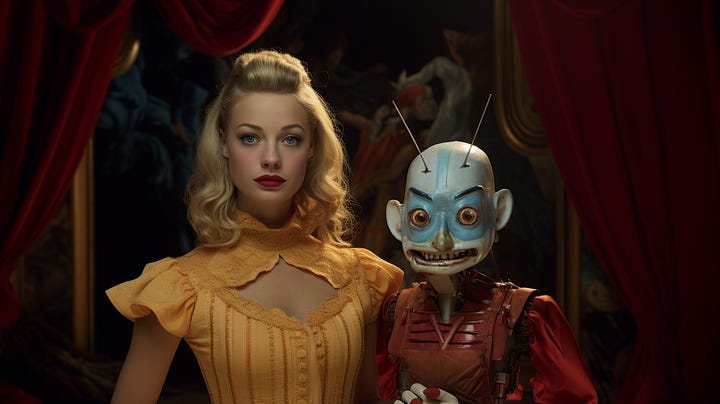

Glorious Midjourney Mistakes